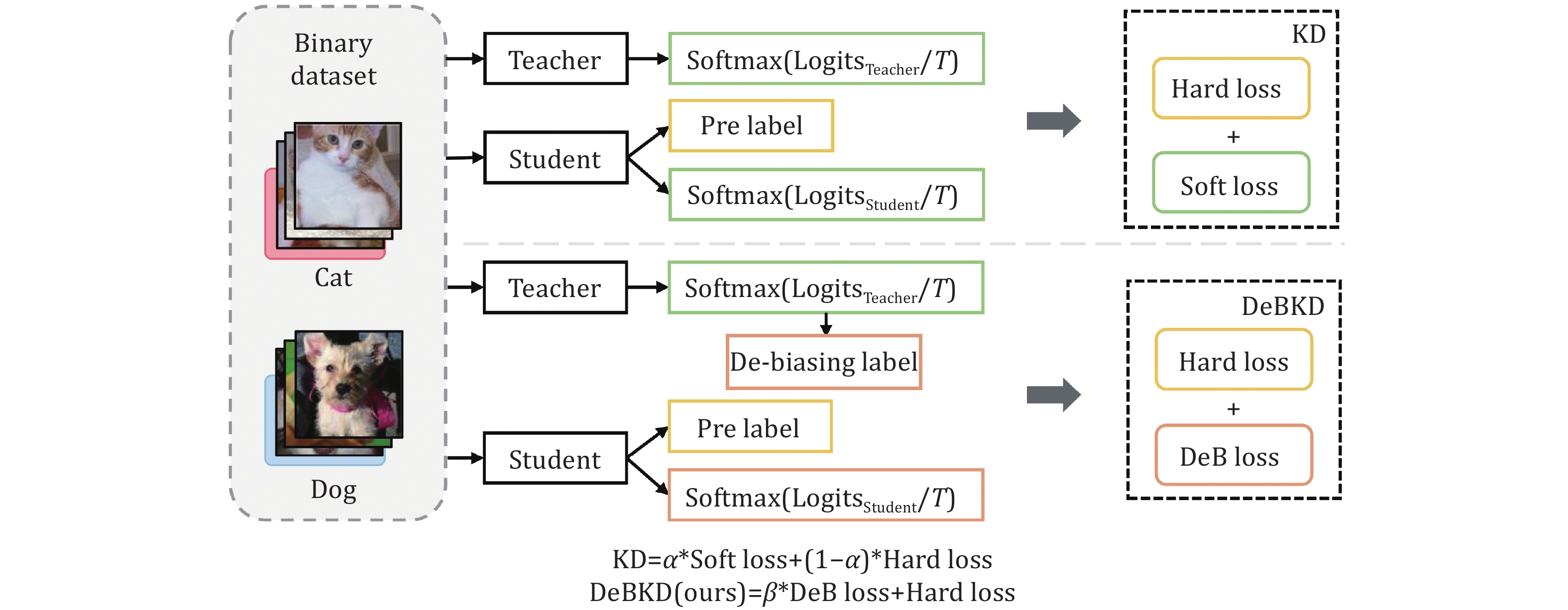

Yan Li, Tai-Kang Tian, Meng-Yu Zhuang, Yu-Ting Sun. De-biased knowledge distillation framework based on knowledge infusion and label de-biasing techniques[J]. Journal of Electronic Science and Technology, 2024, 22(3): 100278




